Using Motion Controllers in AR

In this demo, we’ll make your right hand carry around a 3D cube, and turn your left hand into a flashlight.

This tutorial assumes you’ve finished our Getting Started guide. You can use either an Oculus Rift or an HTC Vive. You will need to set up your headset’s tracking sensors or base stations: unlike the ZED Mini mounted on your headset, the controllers cannot track their own position.

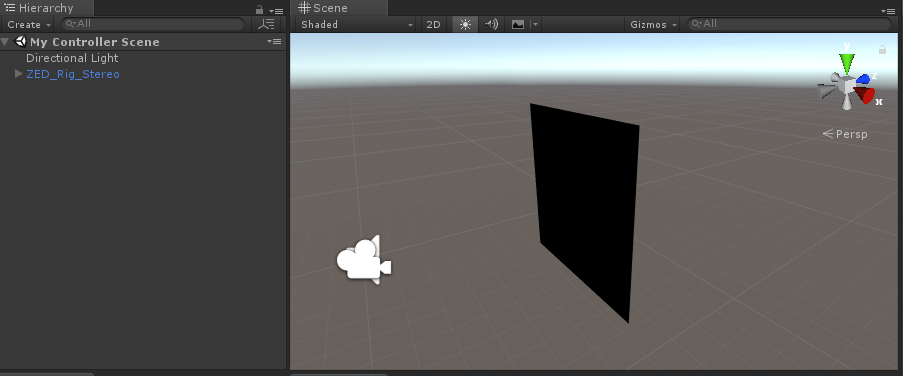

Starting Your Project

Start a new scene, import the ZED plugin, delete the default Main Camera, and drag the ZED_Rig_Stereo prefab into the Hierarchy.

Tracking the Controllers

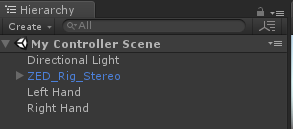

Create two new empty GameObjects in the Hierarchy. Name one “Left Hand” and the other “Right Hand”.

Add a ZEDControllerTracker component to each one.

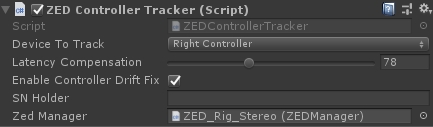

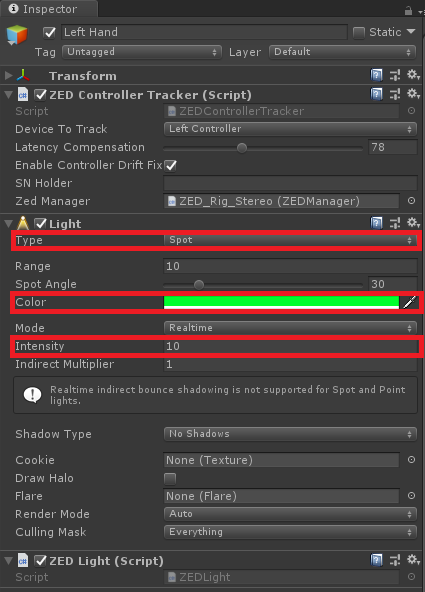

Set the Device to Track field to “Left Controller” on the Left Hand object, and to “Right Controller” on the Right Hand object.

On both objects, drag and drop the ZED_Rig_Stereo object into the Zed Manager field of the ZEDControllerTracker component. This allows the script to compensate for drift when positioning the controllers.

The ZEDControllerTracker component will move these objects to match where your controller is, with a slight delay to compensate for the ZED image’s latency.
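The internals of ZEDControllerTracker are not shown in this tutorial, so the following is only an engine-independent Python sketch of the general idea behind a latency-compensating delay: keep a short history of timestamped controller positions and apply the one recorded roughly one camera latency ago. The class `DelayedPoseBuffer`, its methods, and the 50 ms delay are hypothetical and chosen purely for illustration.

```python
from collections import deque
from typing import Deque, Optional, Tuple

Vec3 = Tuple[float, float, float]


class DelayedPoseBuffer:
    """Hypothetical helper: replays positions `delay` seconds after they were recorded."""

    def __init__(self, delay: float) -> None:
        self.delay = delay
        self._history: Deque[Tuple[float, Vec3]] = deque()
        self._latest: Optional[Vec3] = None  # last position handed out

    def push(self, timestamp: float, position: Vec3) -> None:
        # Timestamps are assumed to arrive in increasing order.
        self._history.append((timestamp, position))

    def sample(self, now: float) -> Optional[Vec3]:
        """Return the newest recorded position that is at least `delay` old."""
        target = now - self.delay
        # Consume every entry old enough to be shown, keeping the newest of them.
        while self._history and self._history[0][0] <= target:
            self._latest = self._history.popleft()[1]
        return self._latest


# Usage: record raw controller positions each frame, display the delayed one.
buf = DelayedPoseBuffer(delay=0.05)  # assumed 50 ms image latency
buf.push(0.00, (0.0, 0.0, 0.0))
buf.push(0.02, (1.0, 0.0, 0.0))
buf.push(0.04, (2.0, 0.0, 0.0))
buf.push(0.06, (3.0, 0.0, 0.0))
delayed = buf.sample(now=0.10)  # newest position recorded at or before t = 0.05
```

Until a recorded position is old enough, `sample` returns `None`, so a caller would simply leave the object where it is for the first few frames.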

Augmenting Your Hands

Unlike in VR, it’s not recommended to put an object directly in your user’s hand in AR. Doing so makes any tracking errors extremely noticeable, since the user’s real hand gives them a near-perfect frame of reference right in front of their face.

Instead, we’ll add an object floating in front of one hand, and make the other hand a flashlight without adding a visible model at all.

Add a Floating Cube

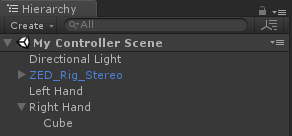

Right-click the Right Hand object and add a cube to it as a child by clicking 3D Object -> Cube.

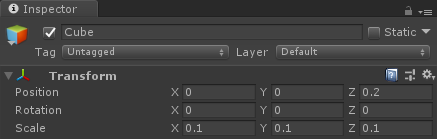

With the new cube selected, go to the Inspector and change its Z Position to 0.2 and its Scale to X = 0.1, Y = 0.1, Z = 0.1. This will make it hover 20 centimeters in front of the controller and shrink it from Unity’s default 1-meter cube to a manageable 10 centimeters per side.
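Because the cube is a child of the Right Hand object, its Position is a local offset that follows the controller's rotation as well as its position. Unity does this for you; the Python sketch below only illustrates the underlying math, assuming the parent has a scale of 1 and its rotation is given as a unit quaternion in (x, y, z, w) order. The function names are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (x, y, z, w), assumed unit length


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def rotate(q: Quat, v: Vec3) -> Vec3:
    """Rotate v by unit quaternion q using v' = v + w*t + u x t, where t = 2*(u x v)."""
    u = (q[0], q[1], q[2])
    w = q[3]
    c = cross(u, v)
    t = (2.0 * c[0], 2.0 * c[1], 2.0 * c[2])
    ut = cross(u, t)
    return (v[0] + w * t[0] + ut[0],
            v[1] + w * t[1] + ut[1],
            v[2] + w * t[2] + ut[2])


def child_world_position(parent_pos: Vec3, parent_rot: Quat, local_offset: Vec3) -> Vec3:
    """World position of a child whose parent has unit scale."""
    offset = rotate(parent_rot, local_offset)
    return (parent_pos[0] + offset[0],
            parent_pos[1] + offset[1],
            parent_pos[2] + offset[2])


# Usage: the cube's local offset from this tutorial, 0.2 m along the controller's Z axis.
cube_offset = (0.0, 0.0, 0.2)
controller_pos = (1.0, 1.5, 0.5)   # example controller position in meters
no_rotation = (0.0, 0.0, 0.0, 1.0)
turned_around = (0.0, 1.0, 0.0, 0.0)  # 180 degrees about the Y (up) axis

facing_forward = child_world_position(controller_pos, no_rotation, cube_offset)
facing_backward = child_world_position(controller_pos, turned_around, cube_offset)
```

With no rotation the cube sits 0.2 m further along Z than the controller; with the controller turned around, the same local offset places it 0.2 m behind that point instead, which is why the cube always appears in front of the controller.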

Adding a Flashlight

Unlike the cube, the light doesn’t need to be represented by a visible 3D object. Instead, we’ll add the necessary components directly to the Left Hand object.

Select the Left Hand object.

Add the ZEDLight script. When you add it, it will automatically add Unity’s Light component as well.

Change the Light component’s Type to Spot, the Color to a bright color of your choosing, and the Intensity to 10. This will turn it into a disco-style spotlight attached to your hand.

Run the Scene

You’re ready to go! Run the scene, grab your controllers and play around. Move the cube around and behind real-world objects. Shine the light at surfaces near and far, and at different angles. Change the color of the light to see how it looks. Congrats on becoming an augmented human being!